Credit Risk Scoring & Loan Default Prediction

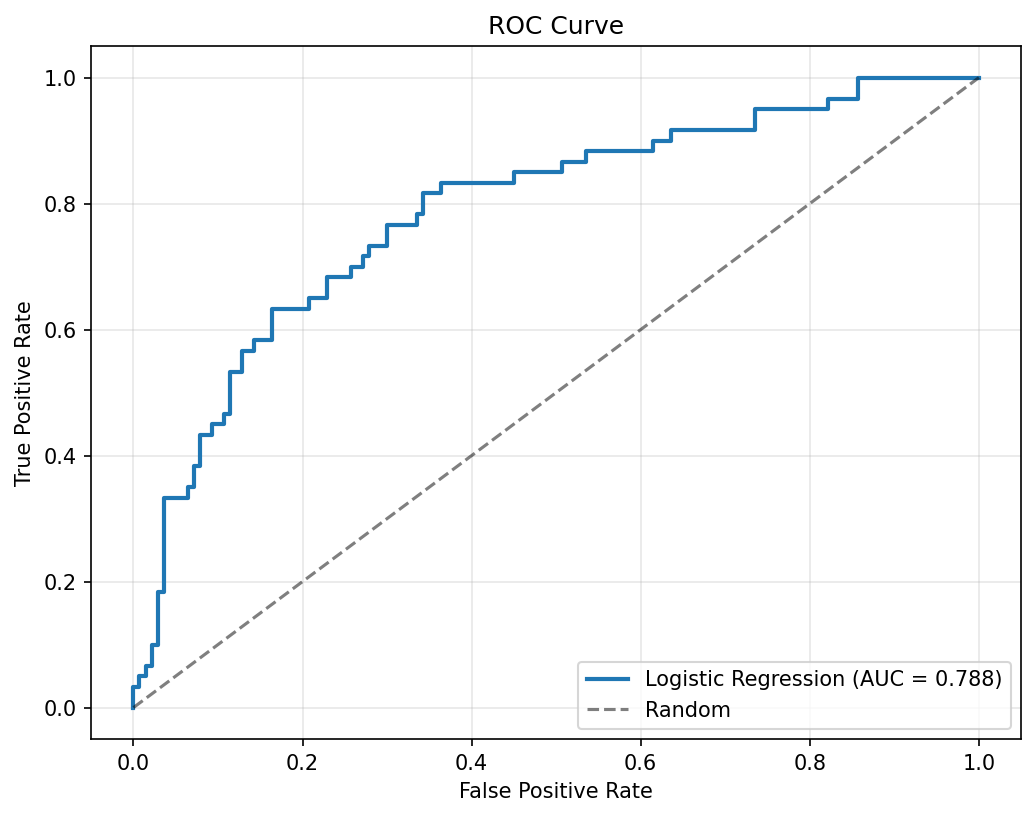

End-to-end ML pipeline predicting loan defaults with SHAP explainability and 0.788 AUC.


Key Metrics

Best AUC Score: 0.788

Engineered Features: 67

Models Compared: 3

Class Imbalance Method: SMOTE

Approach

Technical Overview

Dataset & Preprocessing

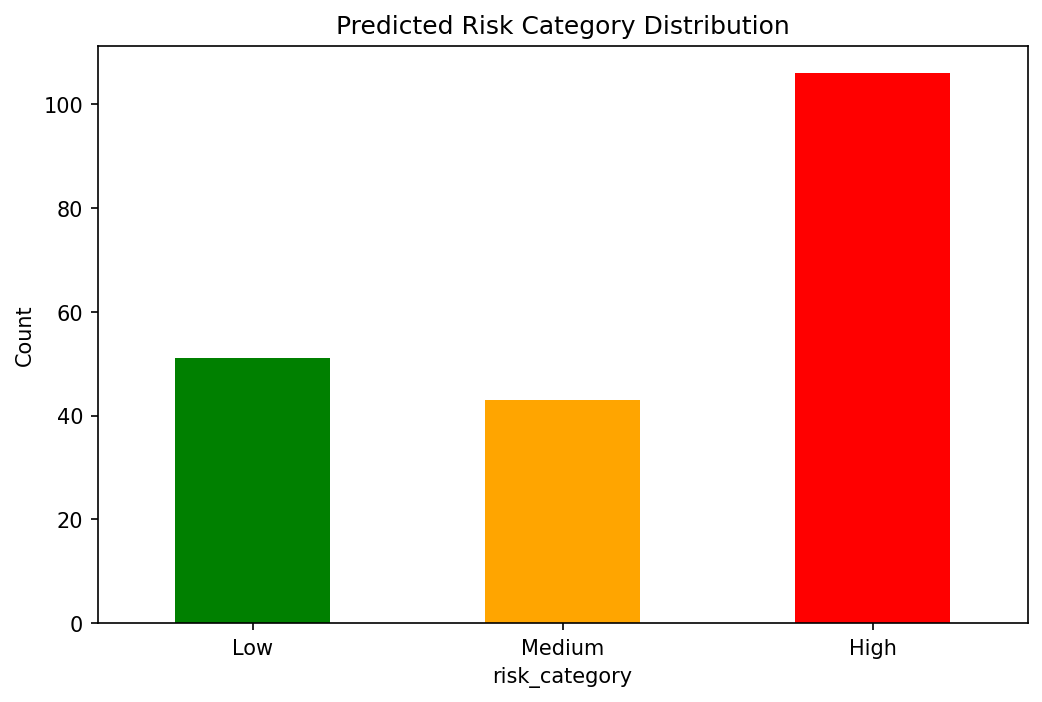

The German Credit Dataset is imbalanced, with roughly 70% good-credit and 30% bad-credit cases, which is a common challenge in credit scoring. SMOTE (Synthetic Minority Over-sampling Technique) was applied to the training set so that the model learned meaningful default patterns rather than simply predicting the majority class.
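The interpolation idea behind SMOTE can be sketched in a few lines of NumPy. This is a simplified illustration rather than the project's code, which presumably relied on a library implementation. The function name `smote_sketch`, the toy data, and the 70/30 split are assumptions made for the example, and the sketch handles numeric (or already encoded) columns only.

```python
import numpy as np


def smote_sketch(X_min, n_new, k=5, seed=0):
    """Create n_new synthetic minority rows by interpolating between a
    minority sample and one of its k nearest minority neighbours."""
    rng = np.random.default_rng(seed)
    X_min = np.asarray(X_min, dtype=float)
    n = len(X_min)
    k = min(k, n - 1)

    # Pairwise squared distances among minority samples only.
    d2 = ((X_min[:, None, :] - X_min[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(d2, np.inf)  # a point is not its own neighbour
    neighbours = np.argsort(d2, axis=1)[:, :k]

    base = rng.integers(0, n, size=n_new)  # starting sample for each new row
    picked = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))  # position along the connecting segment
    return X_min[base] + gap * (X_min[picked] - X_min[base])


# Toy training split (hypothetical data): 70 non-defaults, 30 defaults.
rng = np.random.default_rng(42)
X_train = rng.normal(size=(100, 4))
y_train = np.array([0] * 70 + [1] * 30)  # 1 = default (minority class)

X_min = X_train[y_train == 1]
n_new = int((y_train == 0).sum() - (y_train == 1).sum())
X_syn = smote_sketch(X_min, n_new)

# Oversample the training data only; the holdout set is left untouched.
X_bal = np.vstack([X_train, X_syn])
y_bal = np.concatenate([y_train, np.ones(n_new, dtype=int)])
```

Because every synthetic row lies on a segment between two real minority rows, the new points stay inside the region the minority class already occupies instead of duplicating existing rows.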

Feature Engineering

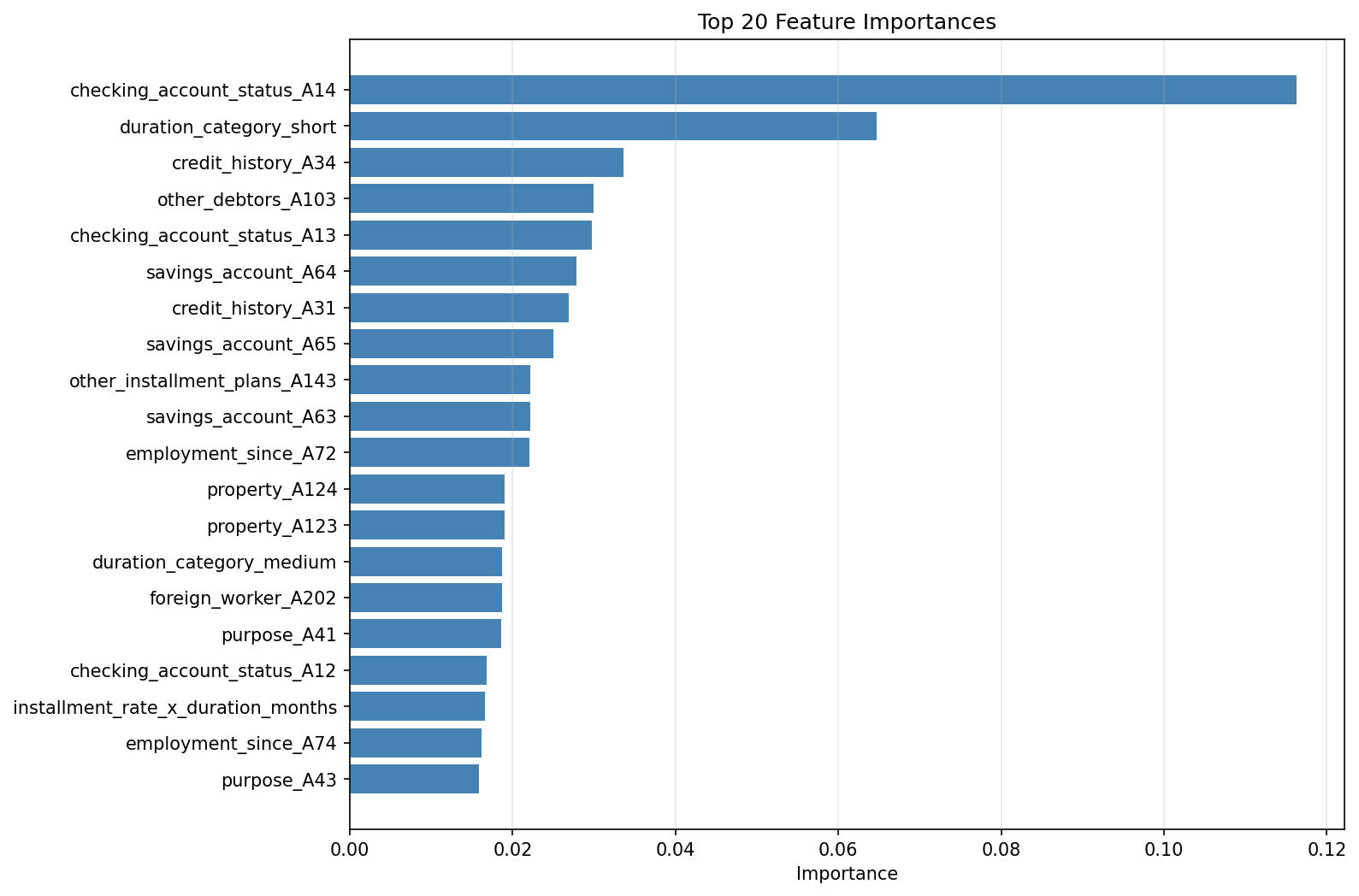

Raw features were expanded from 20 to 67 through interaction terms, polynomial features, and domain-driven transformations. This expansion gave the tree-based models additional signal, while logistic regression, which cannot learn feature interactions on its own, benefited directly from the explicit interaction terms.
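As a rough sketch of the three kinds of expansion described above, the snippet below builds pairwise interaction terms, squared terms, and one ratio feature. The column names (`duration`, `amount`, `age`), the sample rows, and the `amount_per_month` ratio are illustrative assumptions, not the project's actual 67 features.

```python
from itertools import combinations

import numpy as np


def expand_features(X, names):
    """Return X plus pairwise interaction terms and squared terms."""
    X = np.asarray(X, dtype=float)
    n_cols = X.shape[1]
    cols = [X[:, j] for j in range(n_cols)]
    out_names = list(names)

    # Interaction terms: x_i * x_j for every pair i < j.
    for i, j in combinations(range(n_cols), 2):
        cols.append(X[:, i] * X[:, j])
        out_names.append(f"{names[i]}*{names[j]}")

    # Degree-2 polynomial terms: x_j squared.
    for j in range(n_cols):
        cols.append(X[:, j] ** 2)
        out_names.append(f"{names[j]}^2")

    return np.column_stack(cols), out_names


# Hypothetical numeric columns: loan duration (months), amount, applicant age.
names = ["duration", "amount", "age"]
X_raw = np.array([[12.0, 2400.0, 35.0],
                  [24.0, 6000.0, 50.0]])

X_exp, exp_names = expand_features(X_raw, names)

# One domain-driven transformation (assumed for illustration):
# the loan amount spread over its duration.
amount_per_month = X_raw[:, 1] / X_raw[:, 0]
X_exp = np.column_stack([X_exp, amount_per_month])
exp_names.append("amount_per_month")
```

Three raw columns become ten here (3 original, 3 interactions, 3 squares, 1 ratio); applying the same pattern selectively to 20 raw features is one way a set of 67 could arise.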

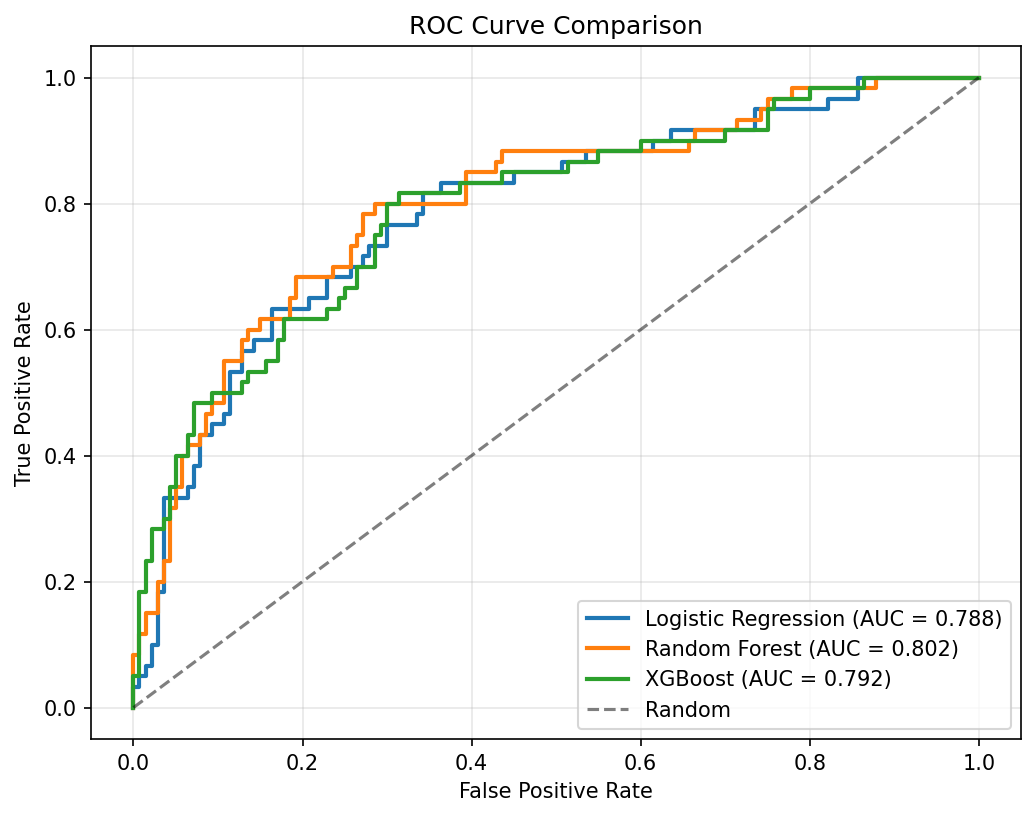

Model Comparison

Three classifiers (Logistic Regression, Random Forest, and XGBoost) were trained and evaluated, each with cross-validated hyperparameter tuning. Logistic Regression generalized best at 0.788 AUC, outperforming the more complex tree ensembles on a dataset of this size.
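The comparison described above might look roughly like the following scikit-learn sketch. It runs on synthetic data from `make_classification`, swaps in scikit-learn's `GradientBoostingClassifier` for XGBoost to avoid an extra dependency, and uses small illustrative parameter grids; none of these choices are taken from the project itself, and the resulting scores are unrelated to the 0.788 reported here.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the credit data (assumption): 20 features, ~30% positives.
X, y = make_classification(n_samples=600, n_features=20, n_informative=6,
                           weights=[0.7, 0.3], random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# Each candidate pairs an estimator with a small, illustrative search grid.
candidates = {
    "logistic_regression": (
        make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
        {"logisticregression__C": [0.1, 1.0, 10.0]},
    ),
    "random_forest": (
        RandomForestClassifier(n_estimators=100, random_state=0),
        {"max_depth": [3, None]},
    ),
    "gradient_boosting": (  # stand-in for XGBoost
        GradientBoostingClassifier(random_state=0),
        {"learning_rate": [0.05, 0.1]},
    ),
}

holdout_auc = {}
for name, (model, grid) in candidates.items():
    # 5-fold cross-validated tuning, selecting on ROC AUC.
    search = GridSearchCV(model, grid, scoring="roc_auc", cv=5)
    search.fit(X_tr, y_tr)
    # Score the refitted best estimator on data it has never seen.
    proba = search.predict_proba(X_te)[:, 1]
    holdout_auc[name] = roc_auc_score(y_te, proba)

for name, auc in sorted(holdout_auc.items(), key=lambda kv: -kv[1]):
    print(f"{name}: holdout AUC = {auc:.3f}")
```

Tuning inside cross-validation and reporting AUC on a separate holdout split keeps the comparison fair: no model is selected on the same data used to score it.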

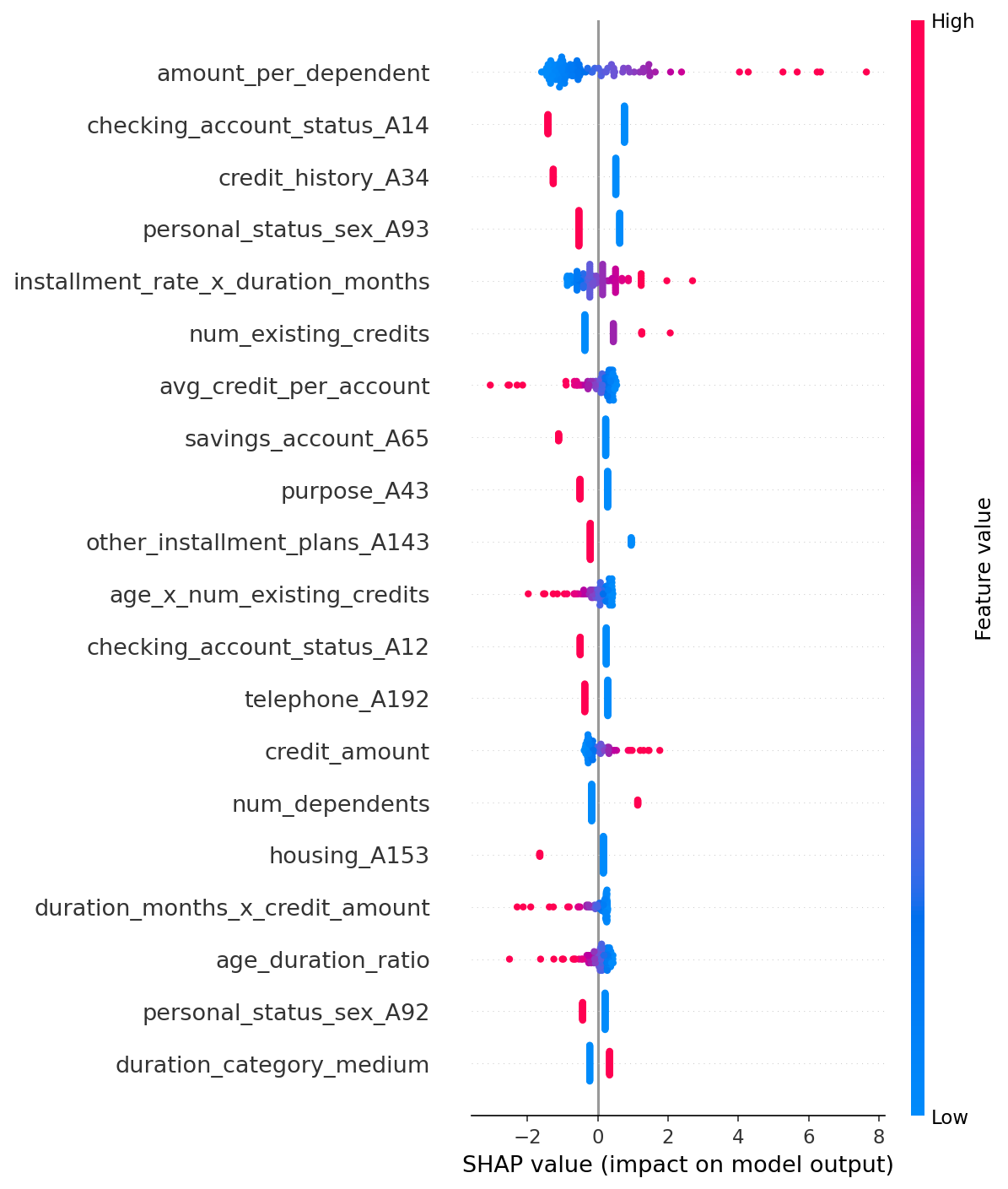

Explainability with SHAP

SHAP (SHapley Additive exPlanations) values were computed for the best model to produce both global feature importance rankings and individual prediction explanations. In credit risk contexts, model interpretability is not optional — regulators require lenders to justify adverse decisions. SHAP provides that audit trail.
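For a linear model such as logistic regression, SHAP values have a simple closed form when features are treated as independent: each feature's contribution to the log-odds is its coefficient times the feature's deviation from the background mean. The sketch below shows that calculation directly in NumPy rather than calling the `shap` library; the weights, bias, and data are made-up values for illustration.

```python
import numpy as np


def linear_shap(weights, bias, X_background, x):
    """SHAP values for a linear model in log-odds space, assuming
    independent features: phi_i = w_i * (x_i - mean_i)."""
    weights = np.asarray(weights, dtype=float)
    mean = np.asarray(X_background, dtype=float).mean(axis=0)
    base_value = float(weights @ mean + bias)  # expected log-odds
    phi = weights * (np.asarray(x, dtype=float) - mean)
    return base_value, phi


# Hypothetical fitted coefficients and a small background sample.
weights = np.array([0.8, -0.5, 0.3])
bias = -1.0
X_background = np.array([[1.0, 2.0, 0.0],
                         [3.0, 0.0, 1.0],
                         [2.0, 1.0, 2.0]])

# One applicant to explain.
x = np.array([3.0, 0.0, 2.0])
base_value, phi = linear_shap(weights, bias, X_background, x)

# Additivity: base value plus contributions reproduces the model output.
log_odds = float(weights @ x + bias)
reconstructed = base_value + float(phi.sum())
default_probability = 1.0 / (1.0 + np.exp(-log_odds))
```

The additivity check is what makes the explanation usable as an audit trail: the per-feature contributions for one applicant sum exactly to that applicant's score, so each adverse decision can be traced to specific inputs.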

Gallery

Output & Visualizations

ROC Curves — Logistic Regression vs. Random Forest vs. XGBoost

SHAP Summary Plot — Global feature importance across all predictions

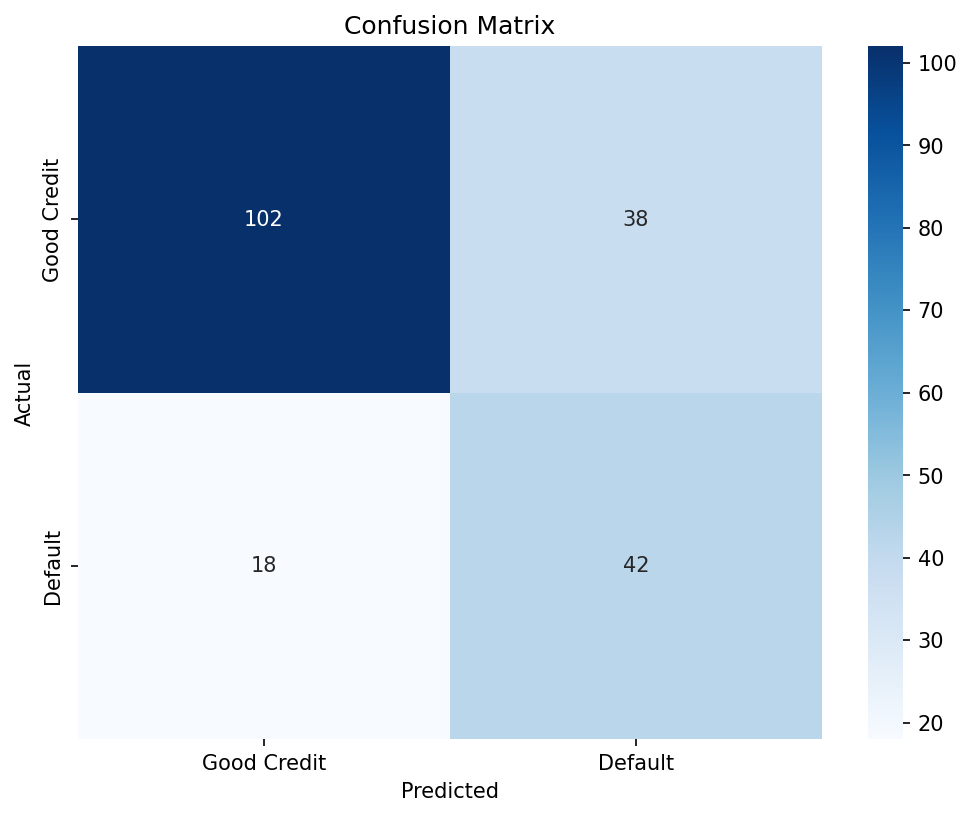

Confusion Matrix — Best model classification results on holdout set

Model Comparison — AUC and F1 across all three classifiers

Feature Importance — Top features driving default predictions

Risk Distribution — Predicted probability distribution by outcome

Stack